Metric operations

Building a metric that is suitable for remeshing may require combining information from different sources or imposing constraints (e.g. minimum or maximum edge lengths, smooth variation with respect to the input mesh, ... ).

Metric Interpolation

In practice, depending on the CFD solver, the metric is either stored on the vertex or the cell center. The definition of an interpolation procedure on metrics is therefore mandatory to be able to compute the metric at any point of the domain. When more than two discrete metrics needs to be interpolated, it is important to use a consistent interpolation framework. For instance, the interpolation needs to be commutative (i.e. the resulting metric does not depend on the order of the interpolation operations between metrics). To that end, the log-Euclidean framework introduced by Arsigny et al. (2006) is used.

Log-Euclidean framework

The authors first define the notion of metric logarithm and matrix exponential. The metric logarithm is defined on the set of metric tensors. For metric tensor , it is given by:

where . The matrix exponential is defined on the set of symmetric matrices. For any symmetric matrix , it is given by:

where . We can now define the logarithmic addition and the logarithmic scalar multiplication :

The logarithmic addition is commutative and coincides with matrix multiplication whenever the two tensors and commute in the matrix sense.

Metric interpolation in the log-Euclidean framework

Let be a set of cell centers and their associated metrics. Then, for a point of the domain such that:

the interpolated metric is defined by:

This interpolation is commutative, but its bottleneck is to perform diagonalizations and to request the use of the logarithm and the exponential functions which are CPU consuming. However, this procedure is essential to define continuously the metric map on the entire domain. Also, since CODA is a cell centered solver and Tucanos is vertex based, this interpolation definition is crucial to get the metric field at the vertexes of the mesh. Moreover, it has been demonstrated (Arsigny et al., 2006) that this interpolation preserves the maximum principle, i.e., for an edge with endpoint metrics and such that then we have:

Metric Intersection

In metric-based adaptation, the metric field computed from the error estimation does not take into account geometric or gradation constraints, that would be defined using different metric fields. Metric intersection is used to get a unique metric field. It consists in keeping the most restrictive size constraint in all directions imposed by this set of metrics.

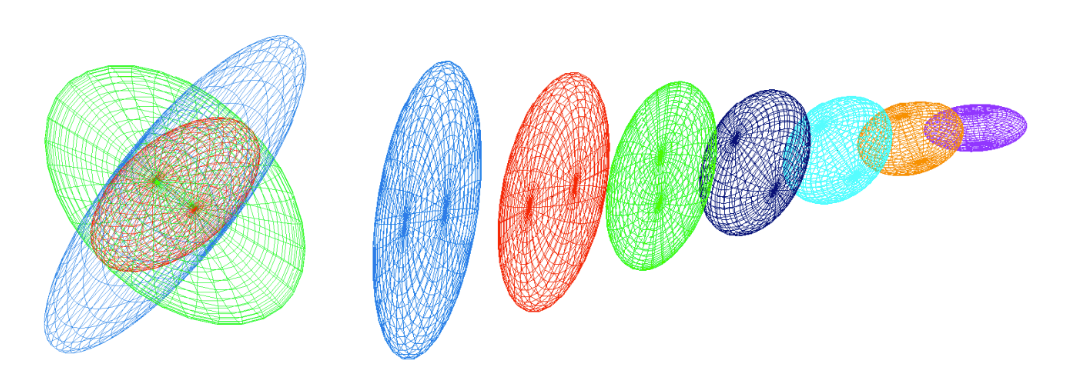

Figure 1: Left, view illustrating the metric intersection procedure with the simultaneous reduction in three dimensions. In red, the resulting metric of the intersection of the blue and green metrics. Right, metric interpolation along a segment where the endpoints metrics are the blue and violet ones. (Loseille 2008)

Formally speaking, let and be two metric tensors given at a point. The metric tensor corresponding to the intersection of and is the one prescribing the largest possible size under the constraint that the size in each direction is always smaller than the sizes prescribed by and . Let us give a geometric interpretation of this operator. Metric tensors are geometrically represented by an ellipse in 2D and an ellipsoid in 3D. But the intersection between two metrics is not directly the intersection between two ellipsoids as their geometric intersection is not an ellipsoid. Therefore, we seek for the largest ellipsoid representing included in the geometric intersection of the ellipsoids associated with and , cf. the Figure above (left). The ellipsoid (metric) verifying this property is obtained by using the simultaneous reduction of two metrics.

Simultaneous reduction

The simultaneous reduction enables to find a common basis such that and are congruent to a diagonal matrix in this basis, and then to deduce the intersected metric. To do so, the matrix is introduced. is diagonalizable with real-eigenvalues. The normalized eigenvectors of denoted by , and constitute a common diagonalization basis for and . The entries of the diagonal matrices, that are associated with the metrics and in this basis, are obtained with the Rayleigh formula:

Remark: and are not the eigenvalues of and . They are the spectral values associated with basis .

Let be the matrix with the columns that are the eigenvectors of . is invertible as is a basis of . We have:

Computing the metric intersection

The resulting intersected metric is then analytically given by:

The ellipsoid associated with is the largest ellipsoid included in the geometric intersection region of the ellipsoids associated with and .

Numerically, to compute , the real-eigenvalues of are first evaluated with a Newton algorithm. Then, the eigenvectors of , which define , are computed using the algebra notions of image and kernel spaces.

See proof

Metric gradation

Metric fields may have huge variations or may be quite irregular when evaluated from numerical solutions that present discontinuities or steep gradients. This makes the generation of a unit mesh difficult or impossible, thus leading to poor quality anisotropic meshes. Generating high-quality anisotropic meshes requires to smooth the metric field by bounding its variations in all directions. It also helps with the convergence of the implicit solver. Mesh gradation (Alauzet, 2010) consists in reducing in all directions the size prescribed at any points if the variation of the metric field is larger than a fixed threshold.

Spanning a metric field

Let be a point of a domain supplied with a metric and the specified gradation. Two laws governing the metric growth in the domain are proposed. In the first one, the metric growth is homogeneous in the Euclidean metric field defined by . The second metric growth is homogeneous in the physical space, i.e., the classical Euclidean space.

The first law associates for any point of the domain a unique scale factor with the metric given by:

With this formulation, each pointwise metric spans a global continuous smooth metric field all over domain parametrized by the given gradation value :

In this case, the resulting metric field grows homogeneously in the Euclidean metric space defined by as the scale factor depends on the length of segment with respect to . As a result, the shape of the metric is kept unchanged while growing. This law conserves the same anisotropic ratio.

For the second law, we associate independently a growth factor with each eigenvalue of , with :

the grown metric at is given by:

The resulting metric field grows homogeneously in the physical space. Indeed, each eigenvalue grows similarly in all directions, as the factor depends only on the distance (in the physical space) from the original point. Consequently, the shape, i.e., the anisotropic ratio of the metric, is no more preserved as the eigenvalues are growing separately and differently. This law gradually makes the metric more and more isotropic as it gradually propagates in the domain.

Alauzet et al. (2021) suggest to mix these two laws to achieve an efficient metric gradation algorithm. For this new law, a growth factor is associated independently with each eigenvalue of :

The authors consider within their numerical examples. For the applications in the work, we will use

Metric reduction

The reduced metric at a point of the domain is given by the strongest size constraint imposed by the metric at and by the spanned metrics (parametrized by the given size gradation) of all the other points of the domain at :

Practical implementation are given in Alauzet (2010).

Thresholds on the local sizes

In order to ensure that the metric field will generate meshes with edge sizes between and , the metric with is modified as with .

Element-implied metric

Given an element , defined by its vertices , the element-implied metric is the metric for which the element is equilateral

If is the jacobian of the transformation from the reference equilateral element to the physical element, the element-implied metric is

In practice is computed as where - is the jacobian of transformation from the reference orthogonal element to the physical element - is the jacobian of transformation from the reference equilateral element to the reference orthogonal element (independent of )

Controlling the step between two metrics

In the remeshing process, it may be interesting to control the step between the element-implied and target metrics. Such control is available within the remesher Tucanos and specified by the parameter .

Given two metric fields and , the objective is to find as close as possible from such that, for all edges :

i.e. to have:

Practically, we define as close as possible as minimizing the Froebenius norm . The optimal is then computed as follows:

- compute ,

- compute the eigenvalue decomposition , with

- compute where ,

- compute .

Figure 2: Representation of the controlled metric by stepping with stepping parameter . The thin blue lines represent and , X. Garnaud

See proof

Metric scaling

- The "ideal" metric is modified using a series of constraints

- Minimum and maximum edge sizes and ,

- Maximum gradation ,

- Maximum step with respect to the element implied metric ,

- A minimum curvature radius to edge lenth ratio (and optionally ), giving a fixed metric

- A scaling is applied, such that the actual metric used for remeshing is

- is chosen to have a given complexity

- A simple bisection is used to compute if all the constraints can be met.

Maths

Notations

- One denotes the Frobenius norm of a matrix by . This norm is associated with the scalar product .

- One denotes by the partial order in the symmetric matrices space : if , i.e. for all , .

Projection on a closed convex subset of the symmetric matrices

The following theorem has been proven in Computing a nearest symmetric positive semidefinite matrix, Higham Nicholas J, 1988:

Theorem 1 Let . Then, is a closed convex subset of the space and then there exists a projection application such that Let and its diagonalisation in an orthonormal basis: , . Then,

Proof

Let and its diagonalisation in a orthonomal basis . As is invariant by linear isometry (, one gets and then the change of variable together with , leads to the following problem This problem can be computed explicitly. Since , ( the canonical basis vectors) This lower bound is reached for the following diagonal matrix , where , and moreover, and is in , which ends the proof.

Generalization

One can generalize the Theorem above as follow:

Theorem 2 Given two symmetric real numbers , let . is a compact convex subset of and then let us denote the associated projection application: Given and its diagonalisation in an orthonormal basis: , . Then,

Proof

The proof follows the one above very closely. The lower bound is got using the inequality: : which is reached for where if , if and otherwise . Again, the matrix is symmetric and indeed in (because since is diagonal, ) and thus is the unique solution of the problem (existence and uniqueness being given by the projection on closed convex sets in a Hilbert space).

Metric optimization problem

Given two metrics . The deformation ratio between a metric and is defined as a function of

The problem.

Given two metrics , and two real numbers , let be the set of metrics which are not to stretched with regard to : Again, this set is compact and convex. Given a matrix norm , we want to find which minimize the distance to , i.e. find such that:

Lemma : Let two metrics > and . The function is bounded and reaches its bounds which are the minimum and maximum of the eigenvalues of . Thus,

Thanks to this lemma, the admissible set can be re-written as

In the -twisted Frobenius norm

First, one studies the case of choosing The above problem reads as follows. Find such that: It is then clear that the change of variable leads to a problem of the form of Theorem 2. Denoting the problem reads find such that Then, from Theorem 2, the solution is and can be computed as follows:

- Compute ,

- Find such that .

- Compute .

- Compute

Remark: Maybe a more suitable formulation would be minimizing the Frobenius norm . This leads to a different problem. For instance, if where . One gets, denoting the error instead of The two distance measures give very different results... does it matter in practice?

Intersection of metrics

Problem.

Given two metrics , we want to find a metric which respects the most restrictive length of and . Moreover, we want that this metric being maximal. More formally, the lenght restriction can be expressed by indeed, is the length of associated with a metric . The local volume associated with a metric is its determinant: , so that the optimization problem reads such that

Solution of the problem.

First, one multiplies by on each side of the constraints, which leads to Then, denoting and applying the change of variable , one gets the simplified problem: such that

This problem can be even more simplified using (does not change the objective as ) and by diagonalization of in an orthonomal basis: Indeed, and , and let us change again the variable , with , the problem is finally: such that

This problem can now be solved explicitly by finding an upper bound. Let such that , then Hadamard inequality provides . Moreover, , from which and then, using the two constraints: , one gets the upper bound reached for the diagonal matrix . Moreover, this matrix is symmetric and verifies and .

Finally, applying the two changes of variables, the unique solution of the problem is

Hadamard inequality

Given a matrix , one has with equality if the are orthogonal.

The result if clear if is singular, if not let , so that one has to show that . is hermitian hence diagonalizable in an orthonomal basis, denoting its eigenvalues, one has